Grapple

A semantic code compiler for LLMs

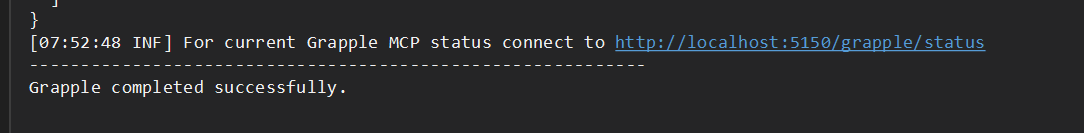

MCP

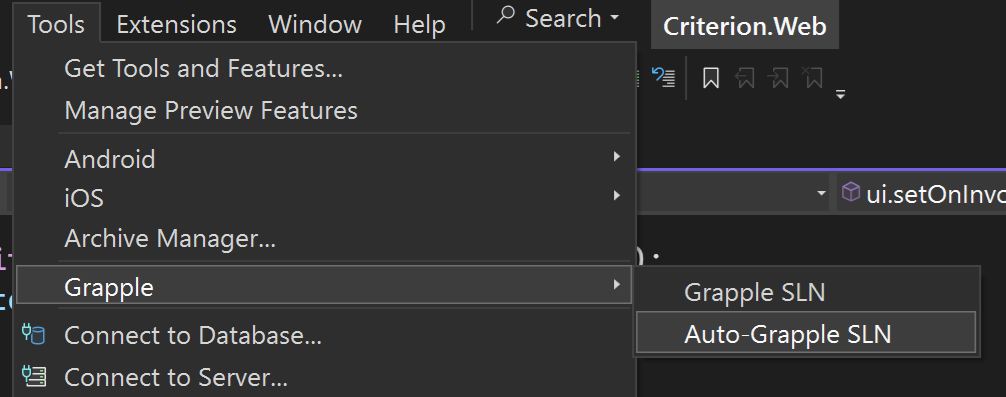

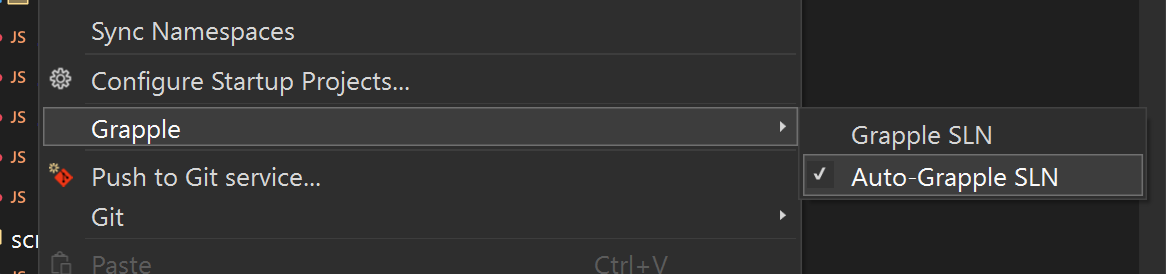

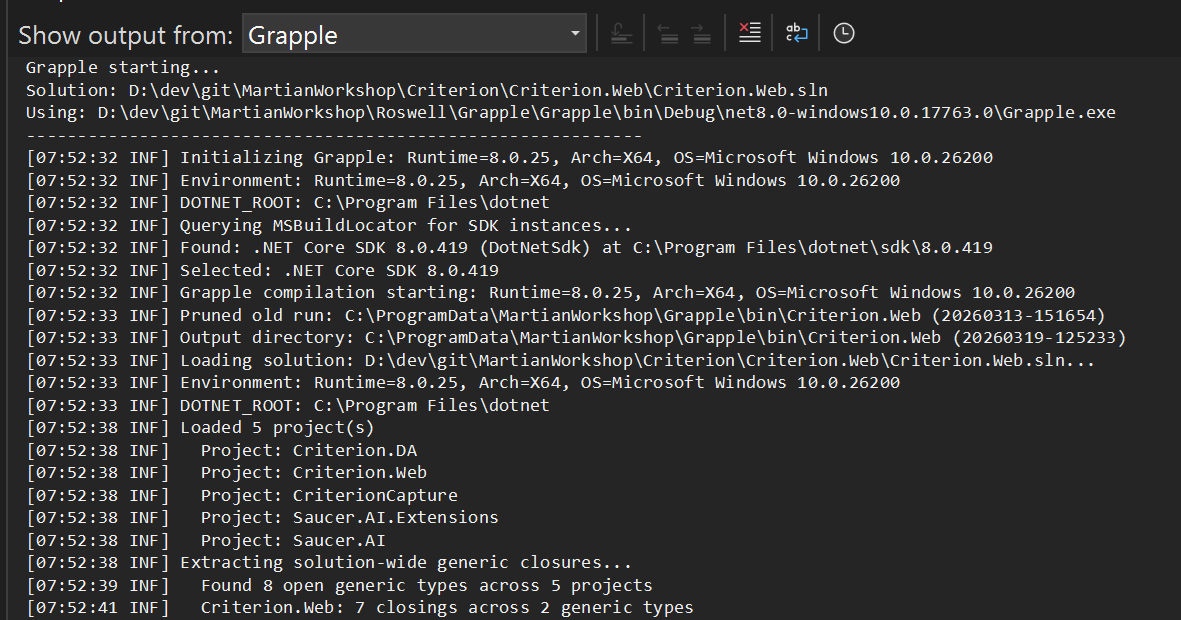

Visual Studio

LLMs are effective code generation tools, and a focused LLM is a joy to use. But ramp-up time and context bloat mean the model may struggle to understand large codebases and their dependencies, and the window in which it performs well may be short-lived, especially in longer sessions or when work crosses project boundaries.

Recycling sessions, managing multiple agents, and maintaining markdown files all help mitigate context bloat and hallucinations, but they still leave ramp-up time and overhead that may be better addressed another way. The Grapple compiler addresses this and more by creating semantic mappings of your source code.
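To make the idea of a semantic mapping more concrete, here is a minimal TypeScript sketch of what such a map could look like and how a tool might query it. Every name in it (`SymbolEntry`, `SemanticMap`, `buildMap`, `dependenciesOf`) is hypothetical and chosen for illustration; it is not Grapple's actual output format or API.

```typescript
// Hypothetical shapes for illustration only; not Grapple's real format.
type SymbolKind = "class" | "interface" | "method" | "property" | "enum";

interface SymbolEntry {
  name: string;         // symbol name, assumed unique in this sketch
  kind: SymbolKind;
  file: string;         // source file that declares the symbol
  line: number;         // 1-based declaration line
  references: string[]; // names of symbols this one depends on
}

type SemanticMap = Map<string, SymbolEntry>;

// Index a flat list of entries by symbol name.
function buildMap(entries: SymbolEntry[]): SemanticMap {
  const map: SemanticMap = new Map();
  entries.forEach((e) => map.set(e.name, e));
  return map;
}

// Collect every symbol reachable from `name` through `references`,
// so a model can be handed only the relevant slice of the codebase.
function dependenciesOf(map: SemanticMap, name: string): string[] {
  const seen = new Set<string>();
  const start = map.get(name);
  const stack: string[] = start ? start.references.slice() : [];
  while (stack.length > 0) {
    const next = stack.pop() as string;
    if (seen.has(next)) continue; // also guards against reference cycles
    seen.add(next);
    const entry = map.get(next);
    if (entry) stack.push(...entry.references);
  }
  return Array.from(seen).sort();
}

const exampleMap = buildMap([
  { name: "OrderService", kind: "class", file: "OrderService.cs", line: 10, references: ["IOrderRepository", "Order"] },
  { name: "IOrderRepository", kind: "interface", file: "IOrderRepository.cs", line: 5, references: ["Order"] },
  { name: "Order", kind: "class", file: "Order.cs", line: 3, references: [] },
]);

console.log(dependenciesOf(exampleMap, "OrderService"));
```

The point of the sketch is that a lookup like `dependenciesOf` returns a small, precise set of symbols, which is cheaper for a model to consume than the full source of every file involved.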

Language

C#

Classes, interfaces, methods, properties, enums, structs, records, delegates, inheritance chains, attributes, public callsites, and cross-project dependencies.

Language

TypeScript

Classes, interfaces, enums, type aliases, functions, methods, imports, and module structure.

Language

CSS

Selectors, custom properties, at-rules, class definitions, design tokens, and dead CSS detection by cross-referencing with markup and scripts.
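The cross-referencing idea behind dead CSS detection can be sketched in a few lines of TypeScript. This is a simplified illustration under stated assumptions, not Grapple's implementation: it uses regular expressions rather than a real parser, handles only flat rules (no nested at-rules such as `@media`), and recognises class usage only in `class`/`className` attributes and in `classList.add`/`classList.toggle` calls with string literals. The function names are hypothetical.

```typescript
// Collect class names that appear in CSS selectors.
// Assumption: flat rules only; nested at-rule blocks are not handled.
function definedClasses(css: string): Set<string> {
  const classes = new Set<string>();
  // Drop declaration blocks so values like "1.5em" are not read as classes.
  const selectorsOnly = css.replace(/\{[^}]*\}/g, " ");
  const classPattern = /\.(-?[_a-zA-Z][\w-]*)/g;
  let m: RegExpExecArray | null;
  while ((m = classPattern.exec(selectorsOnly)) !== null) {
    classes.add(m[1]);
  }
  return classes;
}

// Collect class names referenced from markup or script source text.
function usedClasses(source: string): Set<string> {
  const used = new Set<string>();
  let m: RegExpExecArray | null;
  // class="a b" or className="a b"
  const attrPattern = /class(?:Name)?\s*=\s*["']([^"']*)["']/g;
  while ((m = attrPattern.exec(source)) !== null) {
    m[1].split(/\s+/).forEach((c) => { if (c) used.add(c); });
  }
  // classList.add("a") or classList.toggle("a")
  const scriptPattern = /classList\.(?:add|toggle)\(\s*["']([^"']+)["']/g;
  while ((m = scriptPattern.exec(source)) !== null) {
    used.add(m[1]);
  }
  return used;
}

// Classes defined in the stylesheet but never referenced by any source.
function deadClasses(css: string, sources: string[]): string[] {
  const used = new Set<string>();
  sources.forEach((s) => usedClasses(s).forEach((c) => used.add(c)));
  return Array.from(definedClasses(css)).filter((c) => !used.has(c)).sort();
}

const sampleCss =
  ".btn { color: red; } .btn-primary:hover { color: blue; } .legacy-banner { margin: 1.5em; }";
const sampleMarkup = '<button class="btn btn-primary">Go</button>';

console.log(deadClasses(sampleCss, [sampleMarkup]));
```

Here `legacy-banner` is reported because nothing in the markup references it. Passing a script that calls `classList.add("legacy-banner")` as a second source would clear it, which is why scripts need to be scanned along with markup.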

Note: Inter-project dependencies and NuGet/npm packages are also captured for every solution and project.